Built for Real AI Workloads

Aivera.one operates purpose-built AI data center infrastructure designed to support modern machine learning, data processing, and inference at scale.

Every layer of the platform is engineered for performance, reliability, and long-term sustainability.

High-Performance Compute

Our infrastructure is optimized for AI-driven workloads, providing the computational power required for training and deploying advanced models.

- High-density GPU-based servers

- Optimized for parallel processing

- Scalable compute capacity as demand grows

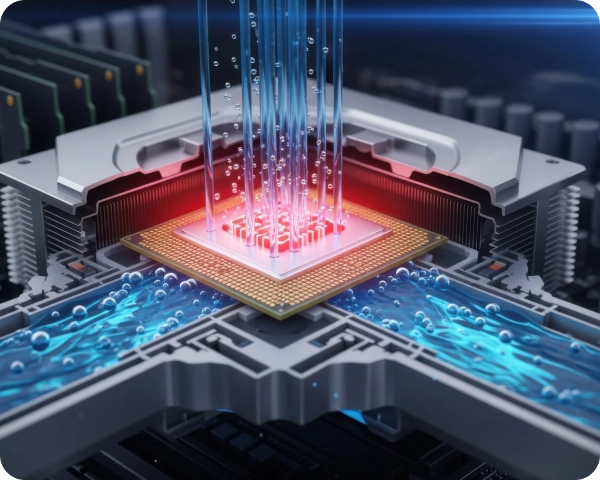

Advanced Cooling & Efficiency

AI systems generate significant heat. Aivera.one leverages modern cooling solutions to ensure performance stability and energy efficiency.

Advanced cooling architectures

Purpose-built cooling architectures regulate heat distribution across GPU-dense environments, reducing thermal stress while improving overall system efficiency.

Reduced energy waste

Energy-efficient infrastructure design reduces thermal and power inefficiencies across AI workloads, supporting sustainable operations.

Improved hardware lifespan and uptime

Optimized operating conditions help extend hardware lifespan while maintaining high availability for AI workloads.

Designed for Scalability

AI demand continues to grow, and so must the infrastructure that supports it.

Aivera.one uses a modular and expandable architecture that allows our data centers to scale alongside usage without disrupting existing operations.

The AI Infrastructure Challenge

Artificial intelligence is advancing rapidly, but the infrastructure required to support it faces significant limitations. These challenges restrict access, slow innovation, and concentrate power in the hands of a few.

High Cost Barriers

Building and maintaining AI-grade infrastructure requires substantial investment in specialized hardware, cooling systems, and energy resources.

For most individuals and small organizations, these costs make direct participation impossible.

Scalability Challenges

AI workloads grow quickly, demanding flexible and expandable systems.

Traditional infrastructure often struggles to scale efficiently, leading to bottlenecks, downtime, and performance limitations.

Centralization Risk

Most AI compute power is controlled by a small number of centralized providers.

This concentration reduces transparency, limits user control, and creates dependency on a few dominant platforms.

Limited Access

Access to high-performance AI infrastructure is restricted to large enterprises and well-funded institutions.

This leaves innovators, researchers, and independent developers with limited opportunities to build and experiment.